Definition

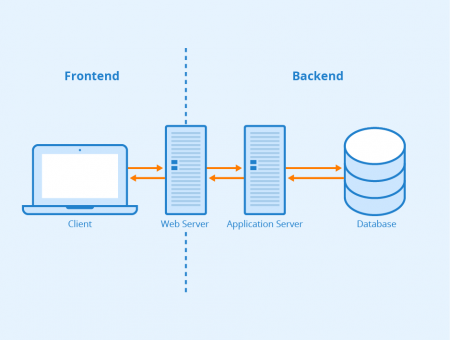

Frontend and backend are two terms used in software development. The frontend covers everything the user of a piece of software or a website can see, touch, and experience. On websites, the frontend includes content (posts, pages, media, and comments), design, and navigation. Another example is the frontend of database software, where users can enter and display data.

Distinguishing frontend from backend

“Backend” refers to everything that users of a piece of software or a website cannot see. This includes, for example, the servers that host websites. A database that stores user input or website content also belongs to the backend. In the case of a website, the internet connects frontend and backend. In content management systems, the terms frontend and backend can refer to the end-user interface of the CMS and its administration area, respectively. Authentication and authorization take place in the backend.

What makes an SEO-friendly frontend?

Users who can easily navigate a website tend to stay longer and are less likely to abandon their visit. This matters for the ranking of your website because dwell time and bounce rate are widely regarded as user signals that Google takes into account. Below we explain a few factors that contribute to an SEO-friendly frontend and thus influence your website’s usability and ranking on Google.

Clean and semantic HTML code

Clean, semantically correct HTML code is the basic requirement for an SEO-friendly frontend. Errors in your HTML code can prevent search engine robots from crawling a page and therefore from indexing it.
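As a rough illustration of what an automated check for such errors might look like, the following sketch uses Python's standard `html.parser` module to report elements that are never closed or closed without being opened. The names `TagBalanceChecker` and `check_markup` are made up for this example. The check is deliberately stricter than the HTML standard, which allows some end tags (for example `</p>` or `</li>`) to be omitted, so it is a starting point rather than a full validator.

```python
from html.parser import HTMLParser

# Elements that never take a closing tag in HTML.
VOID_ELEMENTS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
                 "link", "meta", "source", "track", "wbr"}


class TagBalanceChecker(HTMLParser):
    """Hypothetical helper that records simple nesting errors."""

    def __init__(self):
        super().__init__()
        self.open_tags = []  # stack of elements that are currently open
        self.errors = []     # human-readable problem descriptions

    def handle_starttag(self, tag, attrs):
        if tag not in VOID_ELEMENTS:
            self.open_tags.append(tag)

    def handle_endtag(self, tag):
        if tag in VOID_ELEMENTS:
            return
        if tag not in self.open_tags:
            self.errors.append(f"unexpected </{tag}>")
            return
        # Pop until the matching element; anything above it was never closed.
        while self.open_tags:
            top = self.open_tags.pop()
            if top == tag:
                break
            self.errors.append(f"unclosed <{top}>")


def check_markup(html):
    """Return a list of nesting problems found in the given HTML string."""
    checker = TagBalanceChecker()
    checker.feed(html)
    checker.close()
    # Elements still on the stack at the end of the input were never closed.
    return checker.errors + [f"unclosed <{tag}>" for tag in checker.open_tags]
```

Calling `check_markup("<div><p>Text</div>")` reports the paragraph that was left open, while well-nested markup yields an empty list.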

Avoid framesets and Flash content

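Framesets and Flash content are obsolete and should no longer be used on websites. Framesets are not part of HTML5, and Adobe ended support for Flash Player at the end of 2020, so current browsers no longer display Flash content.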
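To find leftovers of this kind in existing pages, a small scan along the following lines could help. It is only a sketch: the names `LegacyContentFinder` and `find_legacy_content` are invented for this example, and Flash is recognized by two assumed heuristics, the MIME type `application/x-shockwave-flash` or a source file ending in `.swf`.

```python
from html.parser import HTMLParser

FRAME_TAGS = {"frameset", "frame"}
FLASH_MIME = "application/x-shockwave-flash"


class LegacyContentFinder(HTMLParser):
    """Hypothetical helper that notes frameset and Flash usage."""

    def __init__(self):
        super().__init__()
        self.findings = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag in FRAME_TAGS:
            self.findings.append(f"<{tag}> element")
        elif tag in {"object", "embed"}:
            # <object> points to its resource via "data", <embed> via "src".
            source = attributes.get("data") or attributes.get("src") or ""
            mime = (attributes.get("type") or "").lower()
            if mime == FLASH_MIME or source.lower().endswith(".swf"):
                self.findings.append(f"Flash content in <{tag}>")


def find_legacy_content(html):
    """Return descriptions of frameset and Flash usage in an HTML string."""
    finder = LegacyContentFinder()
    finder.feed(html)
    finder.close()
    return finder.findings
```

An empty result does not prove a page is free of outdated techniques; the scan only covers the two cases named above.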

Page loading speed

Visitors often leave a website very quickly if it does not load fast enough. Three seconds is usually considered the maximum acceptable loading time, with around 200 ms being optimal. For mobile websites, displaying the “above the fold” content quickly plays an important role. Webmasters can test the page speed of their website with Google’s free tool “PageSpeed Insights”.
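The two thresholds mentioned above can be turned into a simple rating, sketched below. The function names are invented for this example, and `time_document_fetch` only times the download of the HTML document itself. It ignores images, scripts, and rendering, so it is a much cruder measurement than what PageSpeed Insights reports.

```python
import time
import urllib.request

OPTIMAL_SECONDS = 0.2         # 200 ms, the optimum named in the text
MAX_ACCEPTABLE_SECONDS = 3.0  # upper limit named in the text


def rate_load_time(seconds):
    """Classify a measured loading time against the two thresholds."""
    if seconds < 0:
        raise ValueError("loading time cannot be negative")
    if seconds <= OPTIMAL_SECONDS:
        return "optimal"
    if seconds <= MAX_ACCEPTABLE_SECONDS:
        return "acceptable"
    return "too slow"


def time_document_fetch(url, timeout=10.0):
    """Return the seconds needed to download the HTML document at url."""
    start = time.perf_counter()
    with urllib.request.urlopen(url, timeout=timeout) as response:
        response.read()
    return time.perf_counter() - start
```

A typical use would be `rate_load_time(time_document_fetch(url))` for a URL of your own site, ideally repeated several times because single measurements fluctuate.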

Responsive design

In addition to loading speed, responsive design also plays an important role in a good frontend. Especially in the mobile-first era, consumers use devices with different screen sizes and displays. Websites and images should therefore always be designed so that they work well in all viewports. This not only helps (mobile) users but also makes it easier for search engine bots to crawl a website.

Structured data and accessibility

Good markup and structured data, just like responsive web design, help search engine crawlers navigate and index pages. In addition, structured data enables rich snippets in the SERPs, for example star ratings, prices, or recipe details. Rich snippets, in turn, can positively influence the click-through rate.
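One common way to provide structured data is a JSON-LD block that uses the schema.org vocabulary. The sketch below builds such a block for a product with a price and a star rating. The function name `product_json_ld` and the sample values are invented for this example, and whether a search engine actually shows a rich snippet for the page remains its own decision.

```python
import json


def product_json_ld(name, price, currency, rating, review_count):
    """Build a <script> block with schema.org Product data as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    payload = json.dumps(data, indent=2)
    # Escape "</" so the data cannot close the surrounding <script> element;
    # "\/" is a valid JSON escape for "/".
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    print(product_json_ld("Example Mug", 12.5, "EUR", 4.6, 87))
```

The resulting block is placed in the HTML of the product page it describes.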

Structured data and optimized markup benefit not only search engines but also people with disabilities. Tools such as screen readers work similarly to crawlers and rely on well-structured markup to describe the content of a website. In this context, the alt attributes of images and the title attributes of links also play an important role and should not be missing.
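A quick way to spot images without alternative text is to scan the markup for `<img>` elements that lack an `alt` attribute, as in the sketch below. The names `MissingAltFinder` and `images_missing_alt` are invented for this example. An empty `alt=""` is not reported, because it is the accepted way to mark purely decorative images.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Hypothetical helper that collects <img> elements without alt."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        # Only a completely absent attribute is flagged; alt="" is allowed.
        if "alt" not in attributes:
            self.missing.append(attributes.get("src") or "(no src)")


def images_missing_alt(html):
    """Return the src values of all images that have no alt attribute."""
    finder = MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing
```

The check says nothing about the quality of existing alt texts; those still need to be reviewed by a person.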

For websites with many subpages or online shops with numerous categories and subcategories, breadcrumb navigation can make orientation easier for visitors. Breadcrumb navigation is an additional navigation scheme that is added at the top of a page in frontend design. This has the advantage that users always know their current location on a website. In addition, they can switch to a higher-level or already visited page with just one click, without having to use the back button in their browser or start over at the top navigation level.
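How such a breadcrumb trail might be rendered is sketched below. The function `breadcrumb_html` and the sample shop paths are invented for this example; the markup follows a common accessible pattern with a labelled `<nav>` element, an ordered list, and `aria-current="page"` on the last entry, which is one option among several.

```python
from html import escape


def breadcrumb_html(trail):
    """Render a list of (name, url) pairs as breadcrumb navigation.

    The last pair is the current page and is shown without a link.
    """
    if not trail:
        raise ValueError("a breadcrumb trail needs at least one entry")
    parts = []
    for name, url in trail[:-1]:
        parts.append(f'<li><a href="{escape(url)}">{escape(name)}</a></li>')
    current_name, _ = trail[-1]
    parts.append(f'<li aria-current="page">{escape(current_name)}</li>')
    return '<nav aria-label="Breadcrumb"><ol>' + "".join(parts) + "</ol></nav>"


if __name__ == "__main__":
    print(breadcrumb_html([
        ("Home", "/"),
        ("Shoes", "/shoes/"),
        ("Sneakers", "/shoes/sneakers/"),
    ]))
```

Every entry except the last one is a link, so visitors can jump to any higher level with a single click.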

Internal linking

Connecting a website’s pages with internal links helps search engines understand the structure of the website and discover all of its subpages. Internal links also make it easier for users to find additional information, which increases their dwell time on your site. If you want to discourage search engine bots from following certain links, you can add the attribute rel="nofollow" to these links.
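To get an overview of a page's links, the markup can be scanned for `<a>` elements, as in the following sketch. The names `LinkCollector` and `collect_links` are invented for this example, and a link counts as internal here when its host matches the host of the page, which is a simplification (subdomains, for instance, are treated as external).

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Hypothetical helper that lists the links found on one page."""

    def __init__(self, page_url):
        super().__init__()
        self.page_url = page_url
        self.links = []  # tuples of (absolute_url, is_internal, is_nofollow)

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if not href:
            return
        # Resolve relative links against the URL of the page itself.
        absolute = urljoin(self.page_url, href)
        is_internal = urlparse(absolute).netloc == urlparse(self.page_url).netloc
        # rel may hold several space-separated tokens, e.g. "nofollow noopener".
        rel_tokens = (attributes.get("rel") or "").lower().split()
        self.links.append((absolute, is_internal, "nofollow" in rel_tokens))


def collect_links(page_url, html):
    """Return (absolute_url, is_internal, is_nofollow) for every link."""
    collector = LinkCollector(page_url)
    collector.feed(html)
    collector.close()
    return collector.links
```

Internal links that carry `nofollow` are worth a second look, since they may keep crawlers away from pages you actually want indexed.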

Search engine friendly URLs

When it comes to frontend design, people often forget that a web page’s URL is not only crawled by search engines but also read by users. This is why you should keep URLs short, descriptive, and easy to read. Avoid special characters such as underscores, ampersands, and percent signs. The easier a URL is to read, the better it is for both usability and search engine optimization.
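Readable URLs are often generated automatically from a page title. The sketch below shows one possible approach; the function name `slugify` and the choice of hyphens as word separators are assumptions for this example, not rules taken from the text. Accented letters are reduced to their ASCII base letters, and every other run of special characters becomes a single hyphen.

```python
import re
import unicodedata


def slugify(title):
    """Turn a page title into a short, readable URL segment."""
    # Split accented letters into base letter plus accent, then drop
    # everything that is not plain ASCII.
    normalized = unicodedata.normalize("NFKD", title)
    ascii_text = normalized.encode("ascii", "ignore").decode("ascii")
    # Replace each run of characters other than a-z and 0-9 with one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower())
    return slug.strip("-")
```

For example, `slugify("Price_List & 50% Off")` returns `price-list-50-off`, which avoids the underscore, ampersand, and percent sign of the original title.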

