Definition

Server response time is the time that elapses between a client sending a request to a server and that request being fulfilled. Accessing a website sets off a chain of events, and how long the server takes to respond can have a large effect on the user experience. A lower response time is almost always better, because long response times can lead to user frustration and higher bounce rates.

Response time in practice

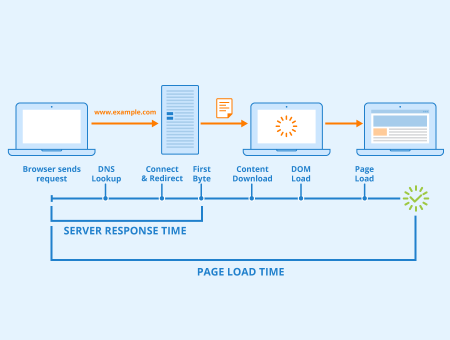

When a user first accesses a website, a DNS lookup occurs: the client queries a DNS server, which resolves the domain name to its corresponding IP address. An HTTP request is then made to that IP address, which may involve redirects, depending on how the server is configured. The server then sends back data. The time between the user accessing the website and the first byte of the response arriving is called time to first byte (TTFB). TTFB does not account for how much data must be sent, only for how long it takes for the first byte to come back. Another metric for tracking response times is time to last byte (TTLB), which describes how long it takes for the last byte of the response to be sent by the server.
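As a rough illustration of the two metrics, the following sketch times a single plain-HTTP GET request with Python's standard library. The function name `measure_response_times` is hypothetical, and the TTFB value is an approximation: the clock stops once the status line and headers have been read, which is slightly later than the arrival of the very first byte.

```python
import http.client
import time


def measure_response_times(host, port=80, path="/", timeout=10.0):
    """Return (ttfb, ttlb) in seconds for one plain-HTTP GET request.

    The clock starts before the connection is opened, so name resolution
    and connection setup are included in both values.
    """
    conn = http.client.HTTPConnection(host, port, timeout=timeout)
    start = time.perf_counter()
    try:
        conn.request("GET", path)      # opens the connection, then sends the request
        response = conn.getresponse()  # returns once status line and headers are read
        ttfb = time.perf_counter() - start
        response.read()                # drain the body up to the last byte
        ttlb = time.perf_counter() - start
    finally:
        conn.close()
    return ttfb, ttlb
```

Calling `measure_response_times("example.com")` would return both values for that host. A single measurement is noisy, so in practice it makes sense to repeat the request several times and look at the median.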

A similar concept to response time is page load time. While the former describes the time until a response is received, the latter measures how long it takes to display an entire webpage. These terms are sometimes used interchangeably, and while response time does influence page load times, they are not the same.

TTFB can vary widely depending on a number of factors, from heavy server-side processing that must finish before data can be sent back to the physical distance between client and server.
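To get a feel for the effect of distance alone, the sketch below estimates a lower bound on round-trip time. It assumes that signals in optical fiber travel at roughly 200,000 km per second (about two thirds of the speed of light in a vacuum) and it ignores routing detours, queuing, and server processing, so real values will be higher. The function and constant names are illustrative.

```python
# Assumption: light in optical fiber covers roughly 200 km per millisecond.
FIBER_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time over a fiber path of the given length."""
    return 2 * distance_km / FIBER_KM_PER_MS


# A client 6,000 km from the server cannot see a round trip under about 60 ms.
print(min_round_trip_ms(6000))
```

Because a request usually needs several round trips before the first byte arrives (connection setup, encryption handshake, the request itself), this floor is paid more than once.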

Improving response times

Since response times have a direct impact on your page load times, they should be as low as possible. Ways to improve the TTFB include:

Choosing the right server

Choosing a server that is fast enough, scales with your needs, and can handle the expected traffic is the first step toward better response times. You should also make sure that databases are optimized.

Using a content delivery network

A content delivery network (CDN) distributes copies of your data across a global network of servers. Each client request can then be routed to the server physically closest to the user, which further reduces response times.
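The idea of picking the physically closest server can be sketched with a great-circle distance calculation. This is a simplified illustration, not how any particular CDN works: the edge locations and names below are made up, and real networks typically route by network conditions rather than pure geography.

```python
import math

# Hypothetical edge locations as (latitude, longitude) in degrees.
EDGE_SERVERS = {
    "frankfurt": (50.11, 8.68),
    "ashburn": (39.04, -77.49),
    "singapore": (1.35, 103.82),
}


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points on Earth in kilometers."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def nearest_edge(user_lat, user_lon, edges=EDGE_SERVERS):
    """Return the name of the edge server closest to the user's location."""
    return min(edges, key=lambda name: haversine_km(user_lat, user_lon, *edges[name]))
```

With these example locations, a user in Paris (`nearest_edge(48.86, 2.35)`) would be served from the Frankfurt edge.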

Enabling caching

Sometimes the server has to run operations on data or call outside services as part of its response. Rather than repeating this work for every request, the results should be cached. Caching saves a copy of the result and returns that copy the next time it is requested. How long a cached copy is served to users is entirely configurable.
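A minimal sketch of this idea is an in-memory cache with a configurable lifetime. The class name `TTLCache` and its methods are hypothetical; production setups would more likely rely on an existing cache layer, but the principle is the same.

```python
import time


class TTLCache:
    """Keep computed results for a fixed number of seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock   # injectable so the expiry logic can be tested
        self._store = {}     # key -> (expires_at, value)

    def get_or_compute(self, key, compute):
        """Return the cached value for key, recomputing it once it has expired."""
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]                       # still fresh: skip the expensive work
        value = compute()                         # expensive operation or outside call
        self._store[key] = (now + self.ttl, value)
        return value
```

A handler could then call something like `cache.get_or_compute("report", build_report)`, where `build_report` stands in for the slow operation, so that only the first request in each time window pays the full cost.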

Using HTTP/2

The protocol that underpins how web browsers request and retrieve data is the HyperText Transfer Protocol (HTTP). A separate HTTP request is made for each file (an image, a CSS file, an HTML file, a JavaScript file, etc.), and under the original protocol each one must be loaded individually. This means websites and services composed of many files can take much longer to load and display than more streamlined content.

HTTP/2 is an improvement on the original protocol. Among other additions, it allows multiple files to be requested simultaneously over a single connection. This leads to better use of available bandwidth and reduces response times. HTTP/2 was standardized in 2015 and is now supported by all major browsers and web servers.
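The benefit of requesting files simultaneously can be illustrated with a small simulation. The sketch below does not speak HTTP/2 at all: each "download" is just a timed pause, and the function names are made up. It only shows why overlapping requests finish sooner than issuing them one after another.

```python
import asyncio
import time


async def fetch(name, delay):
    """Stand-in for downloading one file; the delay simulates network time."""
    await asyncio.sleep(delay)
    return name


async def load_sequentially(files):
    """Request each file only after the previous one has finished."""
    start = time.perf_counter()
    for name, delay in files:
        await fetch(name, delay)
    return time.perf_counter() - start


async def load_concurrently(files):
    """Request all files at once and wait for all of them together."""
    start = time.perf_counter()
    await asyncio.gather(*(fetch(name, delay) for name, delay in files))
    return time.perf_counter() - start
```

For a list such as `[("index.html", 0.05), ("style.css", 0.05), ("app.js", 0.05)]`, the sequential total is roughly the sum of the delays, while the concurrent total is roughly the longest single delay.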

Importance for SEO

It is almost always beneficial to reduce response times as much as possible, from both an SEO and a user experience perspective, because they have a direct impact on page load times. The longer a page takes to load, the higher the bounce rate is likely to be. A service that feels slow to an end user is more likely to be dropped in favor of one that is fast and feels responsive.

There are many services and techniques that can help reduce server response time. These range from using CDNs and cloud services to send data from the server location physically closest to the user, to caching data to improve the TTFB. The end user will perceive loading time as how long it takes for a webpage to display, but many factors behind the scenes contribute to it.

Related links

- How fast should your website load?

- 13 tips for optimizing your server and infrastructure for better performance
