What is deepbot?

Deepbot is an old term for one of the crawlers Google used to crawl websites. In the early 2000s, SEOs and webmasters identified two distinct Google crawlers. Deepbot was one of them: it focused on crawling deep into a site by following the links available to it. The other was dubbed ‘freshbot’ and focused on changes to existing pages, as well as new pages and resources. Since then, Google has made countless updates to its crawlers, continually improving crawl rate and efficiency in order to grow its index and provide better search results. As a result, the term deepbot is used far less today than when it was first coined.

How did deepbot work?

Deepbot was the name given to one of Google’s crawlers. Crawlers, also called bots or spiders, are pieces of software that navigate and document websites. They move from one page to another by following links, taking what can be seen as a snapshot of each page before moving on to the next one.
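To make the idea of following links concrete, here is a minimal sketch of a link-following crawler in Python. It is not how Google's crawlers are implemented; it only illustrates the general technique. The `crawl` function, the `LinkExtractor` class, and the in-memory `SITE` dictionary are all hypothetical names made up for this example, and the sketch deliberately leaves out things a real crawler needs, such as robots.txt handling, rate limiting, and staying on a single host.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url, fetch):
    """Visit pages breadth-first by following links.

    `fetch` is any callable that returns the HTML for a URL, or None
    if the page cannot be retrieved. Returns URLs in visit order.
    """
    seen = {start_url}
    queue = deque([start_url])
    visited = []
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        visited.append(url)  # the "snapshot" step would happen here
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            target = urljoin(url, href)  # resolve relative links
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return visited


# Hypothetical four-page site held in memory, so no network is needed.
SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/c">C</a>',
    "https://example.com/b": '<a href="/">Home</a>',
    "https://example.com/c": "No links here.",
}

if __name__ == "__main__":
    for page in crawl("https://example.com/", SITE.get):
        print(page)
```

Passing the fetch function in as a parameter keeps the sketch runnable offline; swapping in a function that performs real HTTP requests would turn it into a very basic live crawler.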

Deepbot was thought to work by following the links on the different pages of a site. Webmasters appeared to confirm this theory by checking their server logs and running reverse DNS lookups on the visiting IP addresses: the lookup showed whether a visitor was actually a crawler from Google, and the logs showed which pages the bot had visited. Deepbot seemed to focus on crawling as many pages of a site as possible, without taking things like freshness into account.
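The reverse DNS check described above can be sketched in a few lines of Python. This is an illustrative example, not a tool from that era: the function names `is_google_hostname` and `verify_crawler_ip` are hypothetical, and the accepted hostname suffixes are an assumption based on the domains Google documents for its crawlers today. The sketch also adds a forward lookup, which confirms that the hostname resolves back to the same IP address. The default forward lookup handles IPv4 addresses only.

```python
import socket

# Assumption: hostname suffixes treated as belonging to Google's crawlers.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def is_google_hostname(hostname):
    """Return True if the hostname ends with one of the assumed suffixes."""
    return hostname.rstrip(".").lower().endswith(GOOGLE_SUFFIXES)


def verify_crawler_ip(ip, reverse_lookup=None, forward_lookup=None):
    """Check an IP from the server logs with a reverse, then forward, lookup.

    The lookup callables can be replaced for testing; by default they
    use the standard library's DNS helpers.
    """
    if reverse_lookup is None:
        reverse_lookup = lambda addr: socket.gethostbyaddr(addr)[0]
    if forward_lookup is None:
        forward_lookup = lambda host: socket.gethostbyname_ex(host)[2]

    try:
        hostname = reverse_lookup(ip)  # step 1: IP -> hostname
    except OSError:
        return False
    if not is_google_hostname(hostname):  # step 2: check the domain
        return False
    try:
        return ip in forward_lookup(hostname)  # step 3: hostname -> IPs
    except OSError:
        return False
```

A typical use would be to call `verify_crawler_ip` on each IP address in the access log whose user agent claims to be a Google crawler, then list the URLs requested by the addresses that pass the check.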

Deepbot vs freshbot

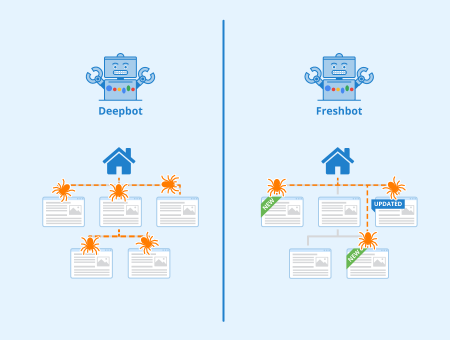

Both deepbot and freshbot were bots used by Google to crawl websites. However, large differences were observed in how they behaved, which led many SEOs and webmasters to distinguish between the two.

Deepbot

Deepbot was the bot Google used to crawl an entire website. It would go through the site and follow every link it could find in order to build a complete overview of the site’s architecture.

Freshbot

Freshbot was the bot Google used that placed much more emphasis on freshness. It looked for fresh content and could be observed crawling a site more or less frequently depending on how often new content was added to it.

Current day

The terms ‘freshbot’ and ‘deepbot’ are rarely used in the SEO community anymore. Google has made many changes to how it crawls and indexes web pages since 2002 and 2003, when the two terms were in more common use. Google currently confirms a number of different bots, most of them tied to one of the search products it maintains.

Screenshot of a section of the table displayed on developers.google.com which provides more information on some of the main crawlers Google currently uses.

Relevance to SEO

The way Google crawls your website is an important point to consider when practicing SEO. Since a page has to be crawled before it can be indexed, understanding how Google’s crawlers work and optimizing your site for them can help your SEO efforts. Although neither term is used anymore, optimizing a website for both deepbot and freshbot was an important part of SEO back in the early 2000s.

Nowadays, optimizing your website for Google’s crawlers is still important, although the methods have changed over time. Page speed, content freshness, backlinks, and various other factors are often mentioned by SEO professionals as the most important ones when optimizing for search engine crawlers. Getting these right helps search engines discover your website and index it.

Related links

- https://www.webmasterworld.com/forum3/11945.htm

- https://developers.google.com/search/docs/advanced/crawling/overview-google-crawlers
